Why A/B Testing Matters at Every Stage — From Startups to Global Platforms

In the technology world, few ideas are repeated as often as the mantra popularized by Y Combinator: “Build something people want.” At first glance, the phrase sounds simple. If users want your product, success should follow. But in practice, the challenge is far more nuanced.

A more operationally useful rephrasing might be:

“Build something you can convince people outside your immediate circle that they can achieve value in.”

This reframing emphasizes measurable validation over intuition. And the most reliable mechanism for that validation—across startups and enterprise-scale organizations alike—is A/B testing.

This case study explores why A/B testing is mission-critical not just for scrappy startups but also for scaled giants like Amazon and Netflix, why it is often overlooked, and why implementing it effectively becomes more complex as companies grow.

Part I: The Startup Illusion — Why Early Teams Think They Don’t Need A/B Testing

Early-stage founders often operate on speed, instinct, and limited data.

They may believe:

• “We don’t have enough traffic.”

• “We already know our users.”

• “We need to ship fast.”

• “Testing slows us down.”

Ironically, this is precisely when A/B testing is most important.
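The traffic objection, at least, can be checked with arithmetic rather than argued about. The sketch below estimates how many users each variant would need in order to detect a given relative lift, using the standard normal-approximation formula for comparing two proportions. The function name, the 5% baseline, and the default significance and power levels are illustrative assumptions, not figures from this case study.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed per variant for a two-sided test.

    Hypothetical helper: assumes a simple two-variant test on a
    conversion rate and the normal approximation to the binomial.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Illustrative inputs only: a 5% baseline signup rate.
small_tweak = sample_size_per_variant(0.05, 0.05)   # detect a 5% relative lift
bold_change = sample_size_per_variant(0.05, 0.50)   # detect a 50% relative lift
```

Under these assumptions, the required sample shrinks sharply as the expected effect grows. A low-traffic team may not be able to test a button color, but it can often test a bold change such as positioning or pricing.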

The Founder Bias Problem

In early stages, most feedback comes from:

• Friends

• Early believers

• Investors

• Internal team members

These audiences are not representative. They are biased toward optimism. They want the product to succeed.

A/B testing introduces a neutral judge: behavior.

Instead of asking, “Do you like this?”, you ask:

• Do more users sign up?

• Do more users activate?

• Do they retain?

• Do they convert?

The reframed mantra applies here:

You are not validating whether you think it’s useful.

You are validating whether people outside your circle behave as if it is valuable.

Without testing, founders mistake politeness for product-market fit.

Part II: Case Example — Early-Stage SaaS Platform

Consider a hypothetical B2B SaaS startup offering AI-powered analytics dashboards.

Initial Hypothesis

The team believes that “users want advanced AI explanations front and center,” so they design a homepage emphasizing technical capability.

Traffic comes in from paid ads. Signups are low.

Instead of redesigning everything, they test:

Version A: AI-heavy messaging

Version B: Clear ROI-driven messaging (“Reduce reporting time by 40%”)

Result: Version B increases signup conversions by 27% relative to Version A.

Insight: Users do not initially care about technical depth. They care about outcomes.
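Before acting on a result like this, it helps to check that the lift is unlikely to be noise. The following is a minimal sketch of a two-proportion z-test. The visitor and signup counts are invented for illustration (the case example gives only the 27% figure), and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative_lift, z, two_sided_p_value) for B versus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    relative_lift = (p_b - p_a) / p_a
    return relative_lift, z, p_value


# Assumed counts: 4,000 visitors per variant, 200 signups on A, 254 on B.
lift, z, p_value = two_proportion_z_test(200, 4000, 254, 4000)
```

With these assumed counts the relative lift works out to 27% and the p-value falls below the conventional 0.05 threshold. The same lift measured on a few hundred visitors would not, which is why the sample behind a headline number matters as much as the number itself.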

Without A/B testing, the company might have:

• Invested heavily in feature complexity

• Built more technical messaging

• Burned capital on scaling the wrong positioning

Testing does not slow learning. It accelerates it.

Part III: Why A/B Testing Becomes Even More Important at Scale

Many assume that once a company reaches massive scale, intuition improves and testing becomes less critical.

The opposite is true.

1. Small Improvements = Massive Impact

At companies like Amazon, a 1% relative increase in conversion can translate into hundreds of millions of dollars in annual revenue.

At scale:

• Minor friction compounds.

• Minor improvements compound.

• Minor errors scale catastrophically.

This is why companies such as Google run thousands of experiments annually.

2. Organizational Complexity Grows

As organizations grow:

• More teams build features.

• More stakeholders influence decisions.

• More politics shape roadmaps.

A/B testing becomes a neutral arbiter.

Instead of:

• “The VP likes this design.”

• “Marketing prefers this headline.”

• “Product thinks this flow is better.”

The question becomes:

• What does user behavior say?

Data reduces internal politics.

3. Platform Risk Increases

A startup making a poor product decision risks stagnation.

A scaled platform risks:

• Revenue decline

• Stock drops

• PR crises

• Customer churn

• Regulatory scrutiny

Large platforms cannot rely on instinct.

Part IV: Why A/B Testing Is Often Overlooked

Despite its importance, A/B testing is frequently underutilized.

1. False Confidence

Teams believe:

• “We already know our customer.”

• “We have user research.”

• “Leadership has experience.”

Experience is not experimentation.

Markets shift. User expectations evolve. Competitive landscapes change.

What worked last year may not work today.

2. Engineering Friction

Testing requires:

• Instrumentation

• Analytics

• Experiment infrastructure

• Data analysis rigor

Early-stage teams often lack these capabilities.

Scaled companies face different problems:

• Legacy systems

• Monolithic codebases

• Cross-team dependencies

• Risk aversion

Testing sounds easy conceptually but can be operationally complex.

3. Vanity Metrics

Companies sometimes track:

• Clicks

• Time on page

• Views

But fail to measure:

• Activation

• Retention

• Revenue impact

• Long-term user value

Poor metric selection leads to misleading conclusions.

Part V: Implementation Challenges by Platform Type

A/B testing is not “one size fits all.” The difficulty depends heavily on platform structure.

1. Web Applications

Relatively easy, thanks to:

• Server-side experiments

• Feature flags

• Split traffic routing

However:

• SEO implications must be considered.

• Caching layers complicate variant delivery.

• Analytics must avoid contamination, such as one user being counted in both variants.
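One common way to keep assignment stable across requests, servers, and cache layers is to derive the variant from a hash of the user and experiment identifiers instead of a random draw per request. The sketch below illustrates that idea. The function name, the identifier format, and the experiment name are assumptions made for the example.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("A", "B")):
    """Deterministically map a user to a variant for one experiment.

    Hypothetical helper: the same (user_id, experiment) pair always
    yields the same variant, so no assignment table is needed.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Interpret the first 8 bytes as an integer and bucket it evenly.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# The experiment name "homepage-messaging" is illustrative.
variant = assign_variant("user-123", "homepage-messaging")
```

Including the experiment name in the hash means a user who lands in variant A of one test is not systematically placed in variant A of every other test, which helps keep concurrent experiments from contaminating each other.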

2. Mobile Apps

More complex, because:

• App store approvals slow iteration.

• Version fragmentation splits user cohorts.

• Tracking is limited by platform privacy changes (for example, Apple's App Tracking Transparency).

Testing in mobile environments often requires:

• Remote configuration

• Experiment toggles

• Careful rollout strategies

3. Marketplaces

Two-sided marketplaces (buyers and sellers) introduce:

• Network effects

• Cross-side interference

Changing buyer flow may affect seller supply behavior.

Testing must account for:

• Ecosystem balance

• Spillover effects

• Long-term equilibrium

4. Algorithmic Platforms

Companies like Netflix test recommendation algorithms constantly.

But experimentation here must consider:

• Feedback loops

• Content diversity

• Long-term engagement

• Algorithmic bias

Optimizing for short-term clicks can reduce long-term satisfaction.

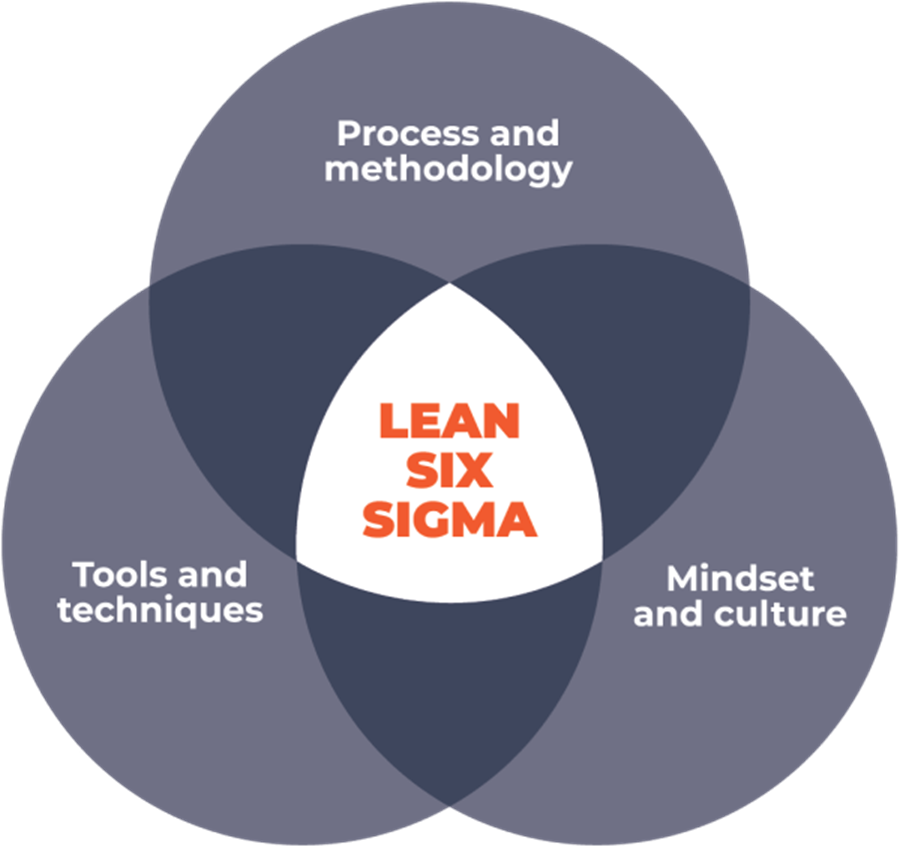

Part VI: The Cultural Component — Experimentation as a Mindset

A/B testing is not just a tactic. It is a philosophy.

At experimentation-driven organizations:

• Ideas are hypotheses.

• Opinions are secondary.

• Failures are data.

• Wins are incremental.

This mindset protects companies from ego-driven product decisions.

It aligns perfectly with the reframed mantra:

Build something you can convince people outside your immediate circle that they can achieve value in.

Convincing is not done through persuasion.

It is done through behavior measurement.

If strangers:

• Sign up,

• Return,

• Pay,

• Refer others,

They are signaling value.

Testing quantifies that signal.

Part VII: Long-Term vs Short-Term Optimization

One of the biggest dangers in A/B testing—especially at scale—is short-term bias.

For example:

• Aggressive popups may increase immediate signups.

• Push notifications may increase short-term engagement.

But they may:

• Increase churn

• Decrease brand trust

• Reduce lifetime value

Advanced experimentation cultures track:

• Cohort retention

• Long-term revenue

• Customer satisfaction

• Brand impact

Testing must align with long-term strategy.
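Tracking cohort retention by variant can be sketched in a few lines. The example below assumes each user record carries the assigned variant, a signup date, and a list of dates on which the user was active. It counts a user as retained if they were active on or after day 30. Both the record shape and that definition of retention are assumptions made for illustration.

```python
from collections import defaultdict
from datetime import date


def retention_by_variant(users, day=30):
    """Share of users per variant who were active on or after `day`.

    `users` is an iterable of (variant, signup_date, active_dates)
    tuples; this record layout is hypothetical.
    """
    totals = defaultdict(int)
    retained = defaultdict(int)
    for variant, signup, active_dates in users:
        totals[variant] += 1
        if any((d - signup).days >= day for d in active_dates):
            retained[variant] += 1
    return {v: retained[v] / totals[v] for v in totals}


# Invented sample data for two variants.
sample_users = [
    ("A", date(2024, 1, 1), [date(2024, 1, 2), date(2024, 2, 5)]),
    ("A", date(2024, 1, 1), [date(2024, 1, 3)]),
    ("B", date(2024, 1, 1), [date(2024, 1, 31)]),
    ("B", date(2024, 1, 10), [date(2024, 3, 1)]),
]
rates = retention_by_variant(sample_users)
```

Comparing these rates alongside the immediate signup metric shows whether a variant that wins on day one is still winning a month later.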

Part VIII: When Not Testing Is More Dangerous Than Testing

Consider two scenarios:

Company A (No Testing Culture)

• Relies on executive instinct

• Launches major redesign

• Conversion drops 15%

• Months lost diagnosing cause

Company B (Testing Culture)

• Rolls out redesign to 10% of users

• Detects a 3% conversion drop

• Halts rollout

• Iterates

Company B preserves capital, brand, and morale.
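Company B's halt decision can be sketched as a simple guardrail check: compare the users who see the redesign against everyone else and stop the rollout if conversion is significantly worse. The function name, the one-sided z-test, the 0.05 threshold, and the traffic figures below are all assumptions for illustration, not a description of any particular company's system.

```python
import math
from statistics import NormalDist


def rollout_decision(control_conv, control_n, treat_conv, treat_n, alpha=0.05):
    """Return "halt" if the treatment converts significantly worse.

    Hypothetical guardrail using a one-sided two-proportion z-test.
    """
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_t - p_c) / se
    # One-sided p-value: chance of a result this low if there is no real drop.
    p_worse = NormalDist().cdf(z)
    return "halt" if p_worse < alpha else "continue"


# Assumed traffic: 900,000 control users at 5.00% conversion,
# 100,000 redesign users at 4.85% (a 3% relative drop).
decision = rollout_decision(45_000, 900_000, 4_850, 100_000)
```

With these assumed volumes the check returns "halt". With a tenth of the traffic, the same 3% relative drop would not clear the threshold, so smaller platforms typically need a longer exposure window before drawing a conclusion. Checking the guardrail repeatedly also raises the false-alarm rate, which a production system would need to account for.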

The risk of not testing grows as:

• Traffic increases

• Customer base expands

• Revenue concentration rises

Part IX: Organizational Scaling and Experiment Velocity

At massive scale, the challenge shifts from “Should we test?” to “How fast can we test?”

Key factors include:

• Experiment velocity (number of tests per month)

• Statistical rigor

• Cross-functional alignment

• Experiment documentation

Companies like Google institutionalized testing frameworks because growth depends not on singular genius ideas but on thousands of incremental improvements.

Compounded micro-optimizations drive macro results.

Part X: The Psychological Barrier

Why do teams resist A/B testing?

Because it threatens ego.

Testing means:

• Your idea might fail.

• Leadership might be wrong.

• Design instincts may not perform.

But this is precisely why it is powerful.

It shifts success from:

• Charisma

• Authority

• Seniority

To:

• Evidence

• Behavior

• Measurable value

Part XI: Returning to the Core Philosophy

“Build something people want” is inspirational.

But in practice, it is incomplete.

People often:

• Say they want things they won’t use.

• Claim they value features they ignore.

• Express enthusiasm without behavior.

A stronger operational philosophy is:

Build something you can convince people outside your immediate circle that they can achieve value in—and prove it through behavior.

Convincing here means:

• They choose it.

• They return to it.

• They pay for it.

• They recommend it.

A/B testing is the mechanism that reveals whether that conviction exists.

Conclusion: A/B Testing Is Not Optional

For startups:

• It prevents building in a vacuum.

• It validates product-market fit.

• It conserves capital.

For scaled enterprises:

• It protects revenue.

• It mitigates risk.

• It optimizes marginal gains at massive scale.

• It reduces political bias.

The larger a company becomes, the more dangerous assumptions are.

Experimentation is not about minor UI tweaks.

It is about building an organization that replaces opinion with evidence.

Whether you are:

• A two-person startup,

• A venture-backed SaaS platform,

• Or a global platform touching billions,

The principle remains the same:

You are not building for yourself.

You are building for people who owe you nothing.

And the only honest way to know if they find value—beyond your immediate circle—is to test.
