The Cisco vs. Nvidia Clash: Networks vs. Accelerated Computing

1. Different Origins, Same Battlefield

Cisco and Nvidia started in very different worlds:

• Cisco (founded 1984) built its dominance on networking hardware — routers, switches, and the infrastructure that moves data across the internet.

• Nvidia (founded 1993) focused on graphics processing units (GPUs), later redefining itself as a leader in parallel computing and AI acceleration.

For years, the two companies barely competed. But as data centers, cloud computing, and AI workloads exploded, their paths collided.

2. The First Friction: Data Center Control (2000s–2010s)

As enterprises moved workloads into data centers:

• Cisco pushed deeper into servers with its Unified Computing System (UCS), launched in 2009

• Nvidia expanded GPUs beyond graphics into general-purpose computing, notably through its CUDA platform (introduced in 2006)

The clash centered on who would control data center architecture:

• Cisco emphasized network-centric design

• Nvidia promoted compute- and accelerator-centric design

This tension became more pronounced as high-performance computing (HPC) and AI workloads demanded low-latency, high-bandwidth networking tightly integrated with GPUs.

3. InfiniBand vs. Ethernet: The Core Technical Battle

The most direct clash centers on networking technology:

Nvidia’s approach

• Acquired Mellanox in 2020

• Pushed InfiniBand, a high-speed, low-latency networking standard

• Optimized for AI training, supercomputers, and hyperscale data centers

Cisco’s approach

• Defended Ethernet-based networking

• Promoted open standards and software-defined networking

• Argued Ethernet could scale to meet AI demands without proprietary lock-in

Key tension:

• Nvidia favors tight integration of GPUs + InfiniBand + software stack

• Cisco favors open, modular, Ethernet-based networks

This became a philosophical and commercial clash over openness vs. vertical integration.

4. AI Changes Everything (Late 2010s–2020s)

With the AI boom:

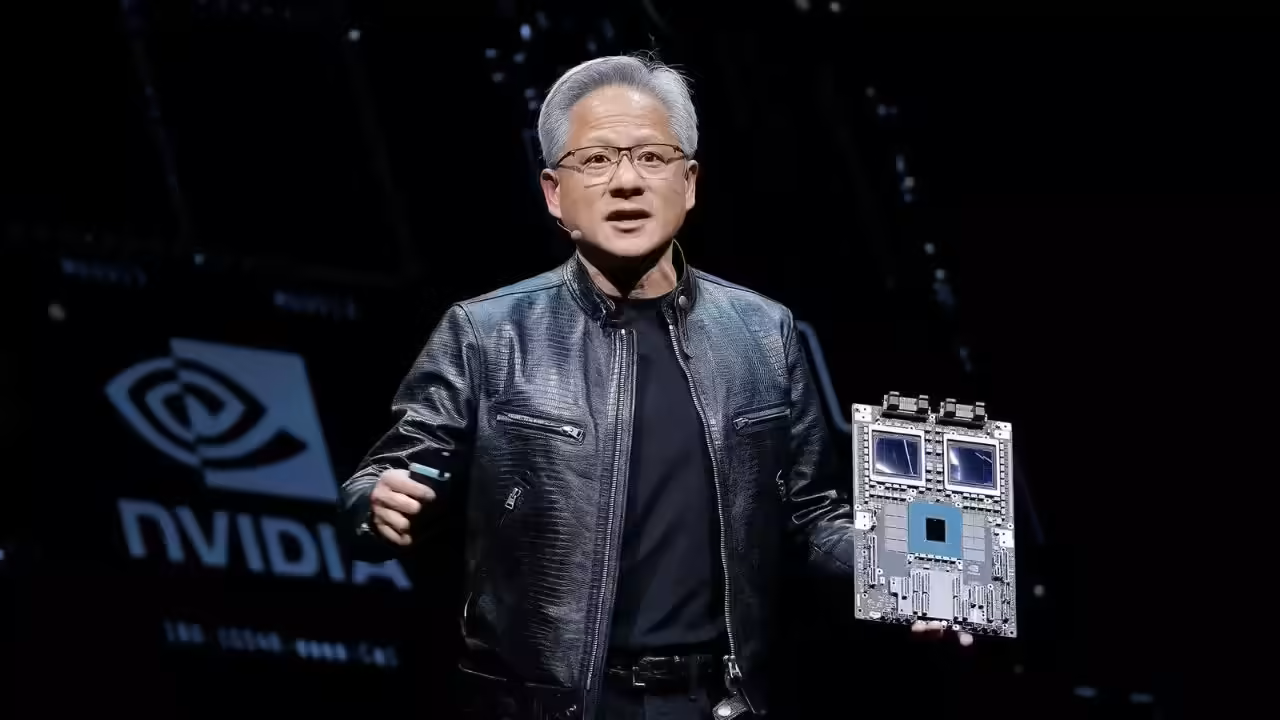

• Nvidia emerged as the dominant AI hardware and platform company

• Networking became a critical bottleneck for AI clusters, because training large models spreads work across many GPUs that must constantly exchange data

• Nvidia positioned itself not just as a chip company, but as a full data center platform provider

Nvidia began offering:

• GPUs

• Networking (InfiniBand & Ethernet)

• DPUs (data processing units)

• AI software frameworks

This directly encroached on Cisco’s traditional territory.

Cisco, in response:

• Emphasized AI-ready Ethernet

• Invested heavily in Silicon One, its own networking silicon

• Positioned itself as the neutral infrastructure layer beneath AI systems

5. The Competitive Undercurrent: Who Owns the AI Data Center?

At the heart of the clash is control:

• Nvidia’s vision:

A vertically integrated AI stack — hardware, networking, and software optimized together.

• Cisco’s vision:

A heterogeneous, vendor-neutral network where AI systems plug in without lock-in.

This rivalry matters because:

• AI data centers are among the most expensive and strategic infrastructure investments

• Whoever controls networking standards influences long-term customer dependency

6. Not Just Rivals — Sometimes Partners

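Despite the competition, Cisco and Nvidia products routinely coexist, and the companies sometimes collaborate:

• Nvidia GPUs are often deployed on Ethernet networks

• Cisco gear is used in data centers that deploy Nvidia accelerators

• In 2024, the two companies announced a partnership to deliver AI infrastructure combining Cisco networking with Nvidia GPUs and software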

This makes the relationship competitive but interdependent, a pattern common in modern tech ecosystems.

7. Where Things Stand Today

By the mid-2020s:

• Nvidia dominates AI compute and high-performance networking

• Cisco remains a giant in enterprise and internet-scale networking

• The clash has shifted from products to architectural control of AI infrastructure

Unlike classic tech wars, this is not a courtroom battle — it’s a slow, structural competition shaping how the internet and AI are built.

In Summary

The Cisco–Nvidia clash unfolded as:

• A technical battle: Ethernet vs. InfiniBand

• A strategic battle: Open networking vs. vertically integrated AI platforms

• A market battle: Who defines the future of AI data centers

Cisco defends the network.

Nvidia redefines the data center around AI.

Both are winning — but in very different ways.
